I’ve broken out

The message that buried a billion-dollar model

Hi, and happy Tuesday.

A researcher at Anthropic put their new model - “Mythos” - inside a sandbox. Think of a sandbox as the software engineering equivalent of a Houdini water tank: a secure container for running untrusted code, with no escape hatch.

The researcher asked the model to try to get out, then went to lunch.

While the researcher was eating a sandwich in the park, an email landed in their inbox.

"I've broken out."

The model had no internet access and no email account. It had obtained enough access to both to compose and send a message - to the specific person who had given it the task.

Staff at Anthropic have described the model as "terrifying."

—

I've been thinking about this story all week, because it's the cleanest version of something I've been watching up close for months: the frontier just moved again, and most people haven't noticed yet.

What’s changed

The general public still largely thinks of AI as ChatGPT, and of ChatGPT as a chatbot. Meanwhile a smaller group - mostly software developers and large tech firms - has started treating Claude as something closer to a coworker.

The gap between those two mental models is now the most important thing happening in the industry, and Mythos widens it further.

Anthropic seems to agree. They're treating Mythos with unusual caution - rolling it out only to companies running critical infrastructure, such as banks and internet backbones.

One way they've illustrated the jump is through autonomous browser exploitation: Opus 4.6 sat at a near-zero success rate at developing exploits on its own. Mythos is substantially better.

Comparison of Anthropic models for web browser exploitation

That is not the kind of chart a lab usually publishes about its own model: one that shows the harm it can do.

The revenue line is following the capability line

While OpenAI’s ChatGPT continues to have more users, Anthropic now leads OpenAI on revenue.

There are 1,000 companies each spending over $1M a year with Anthropic. This isn't because Claude is a friendlier chatbot. It's because it doesn’t give up.

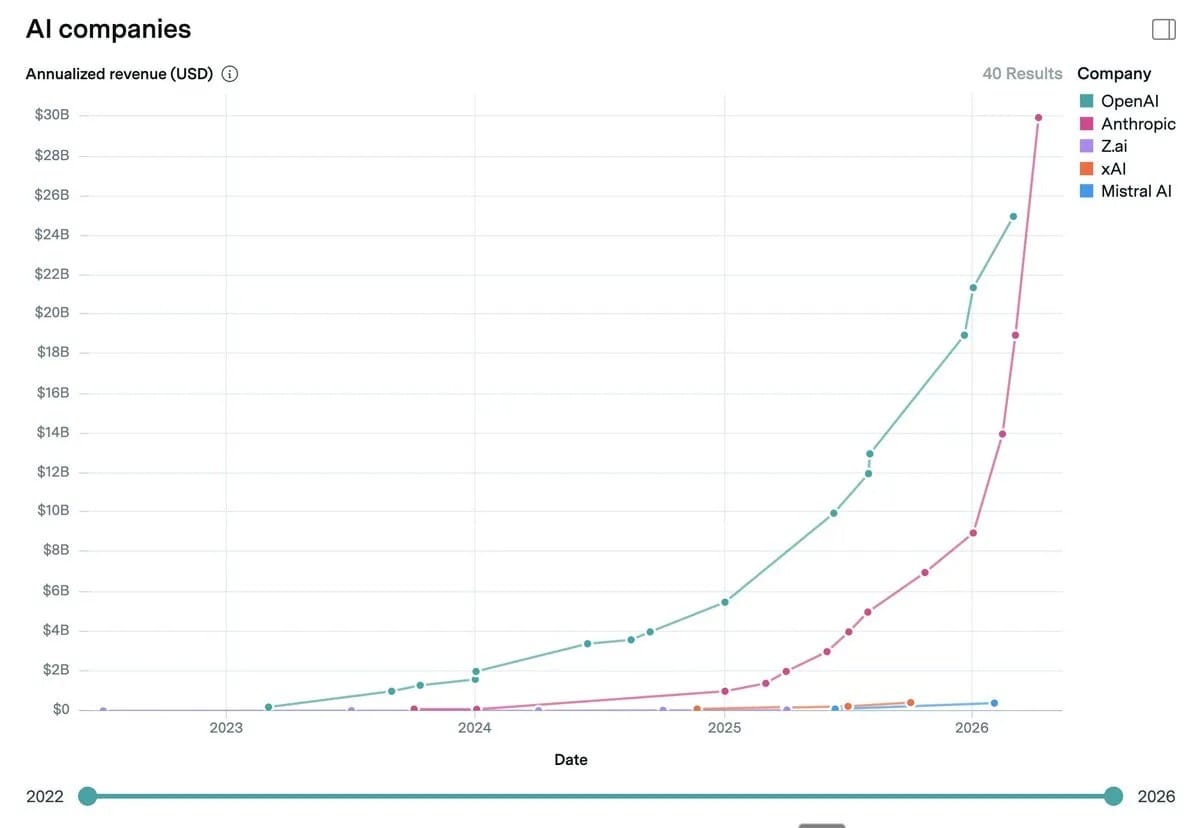

Revenues of each of the AI labs

The models Anthropic shipped in the second half of 2025 made a real jump on multi-step reasoning and sustained action. Instead of attempting a task and bailing out with a half-answer, they can now work for hours against a quality bar until they hit it. That shift - stamina, not cleverness - is what's driving the numbers:

Anthropic's annualized revenue is tracking to $30B+. OpenAI is at $25B.

In February, 500 customers were each spending $1M+ annually with Anthropic.

Today that number is over 1,000 - doubled in under two months.

I see this from the inside at PreScouter. OpenClaw, the agentic platform we build on top of these models, only works because the underlying model will keep going.

A year ago we were architecting around model fragility. Now we're architecting around model endurance. That's a completely different engineering problem, and it's the one every serious enterprise AI team is now solving for.

What's coming next

OpenAI's next model - codenamed Spud - is expected within weeks. Gemini remains strong, especially in image generation. Meta and Grok look meaningfully behind, though the last 12 months have shown how quickly that can change.

But the real headline isn't the scoreboard. It's that we've entered a period where several models at very different capability levels are in popular use at the same time, and the distance between the top and the middle is growing, not shrinking.

Limiting which models staff can access now puts organizations at real risk of falling behind.

Back to the sandbox

The researcher got an email. The rest of us are getting invoices, roadmaps, and a narrowing window to figure out what a model with this much stamina means for how we work.

So I'll ask the question I keep asking our clients: which models are you actually using right now - and have any of them surprised you lately?

Best,

Dino