Becoming AI-Native

↗️ How Google just leapfrogged ChatGPT

3 ways Gemini 3 just changed AI

Hi, and happy Monday.

Google’s Gemini 3, released last week, is the next watershed moment for AI. The Information reports that OpenAI is bracing for "temporary economic headwinds" due to Google's "excellent work".

Commentators suggest Gemini 3 has leapfrogged ChatGPT on most dimensions.

Here are the three most tangible.

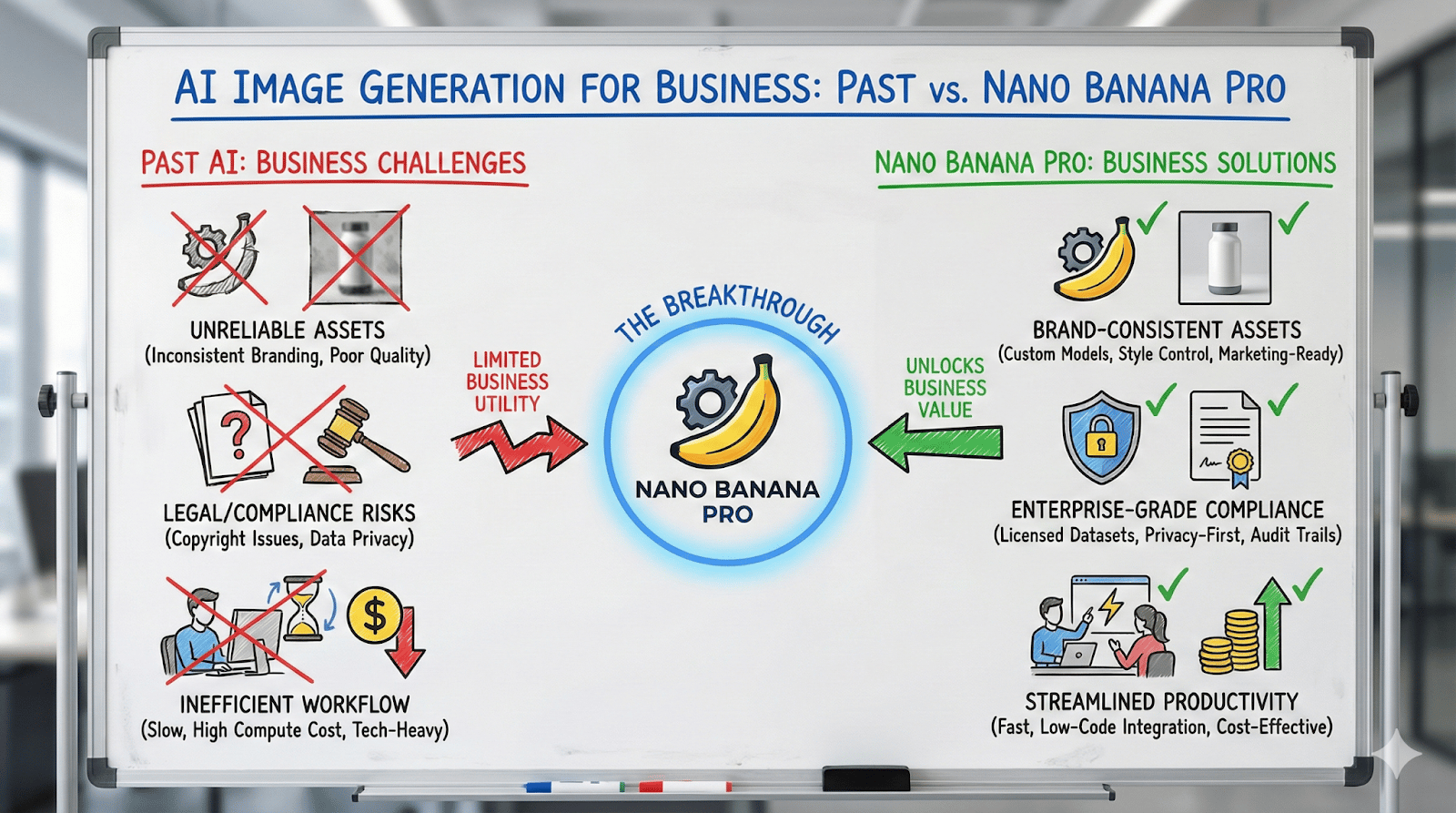

1. Coherent image generation

Gemini 3’s image model, Nano Banana Pro, can reason over your request and pull in information from the Internet. It can then represent that information coherently in various forms. Here’s an example:

Prompt: Make a whiteboard explainer, showing the problems with AI image generation in the past and what makes Nano Banana Pro better. Focus on distinct capabilities useful to business users.

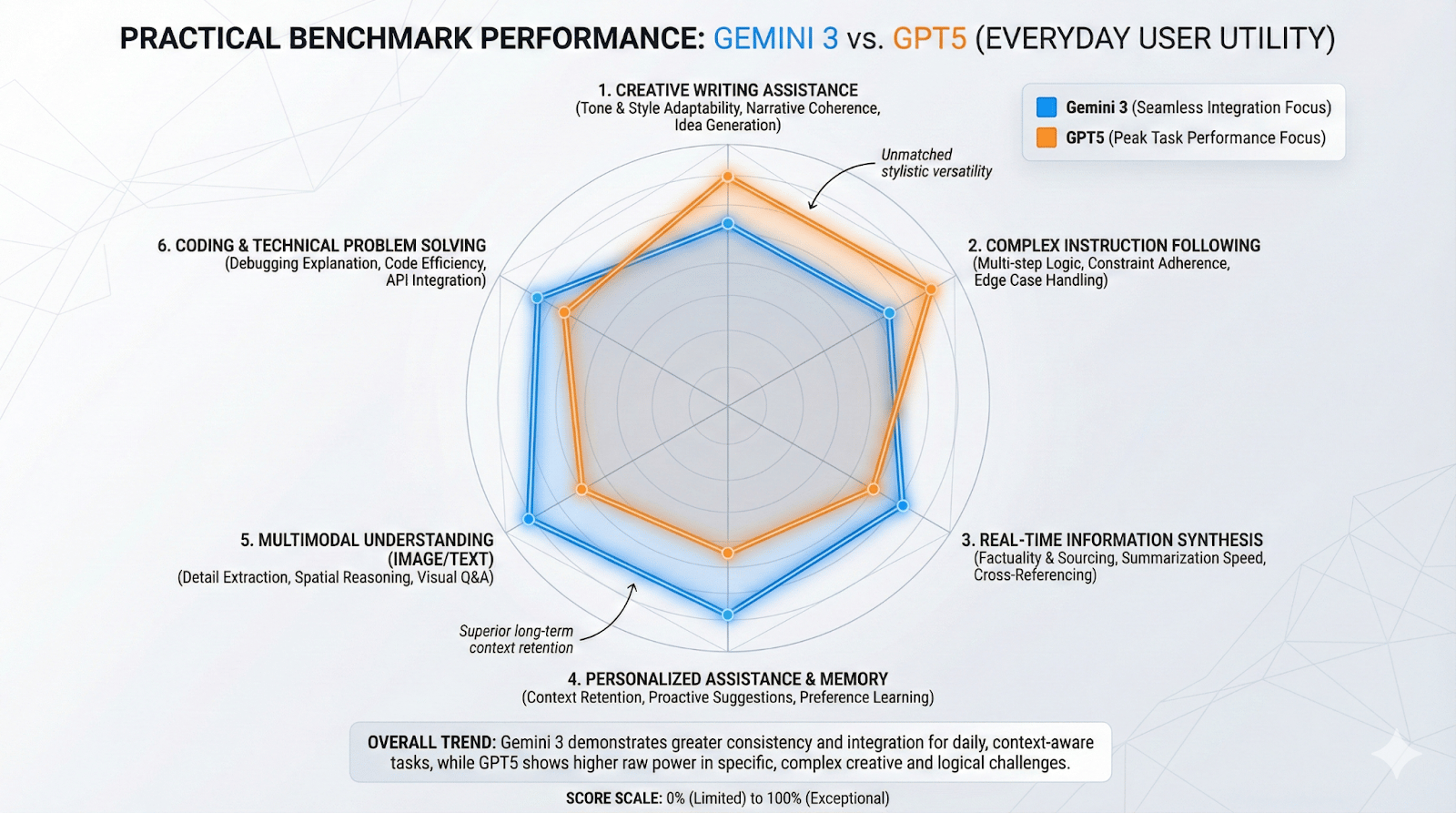

Second, it can create charts that precisely reflect comparative measurements:

Prompt: Create a chart showing the performance of Gemini 3 against GPT5 on the most practical benchmarks for everyday users. Make it nuanced and detailed.

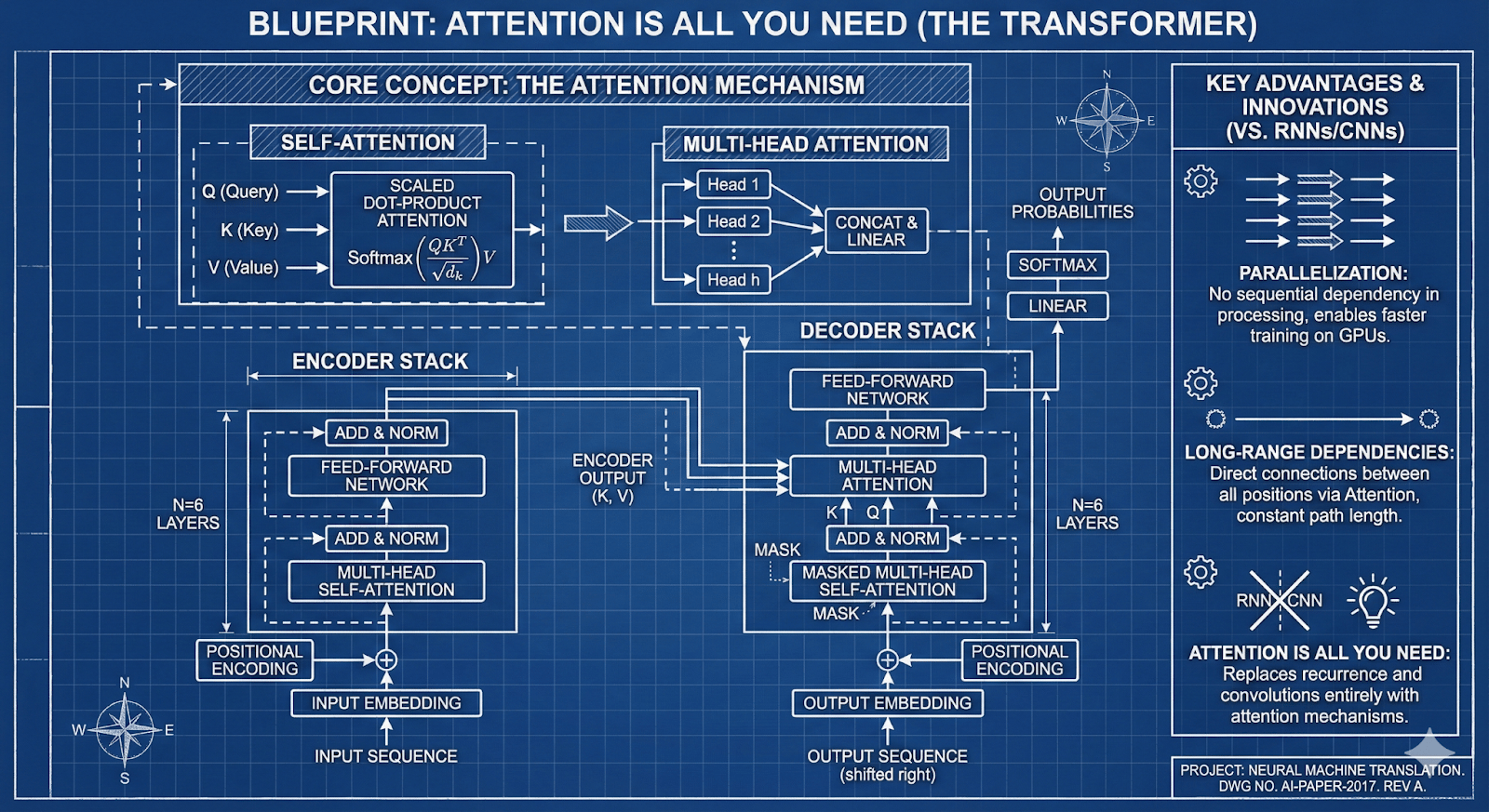

It is also remarkably good at understanding uploaded files and turning them into clever visual forms. Here is one example, mapping out the concepts of the academic paper “Attention Is All You Need”.

Prompt: Make a blueprint style infographic explaining the paper Attention Is All You Need.

All of these have tangible value for a range of business use cases, from presentations to visualizing concepts to aid understanding.

2. Generative User Experiences

Google Search and other properties will soon create dynamic, interactive visualizations for your requests.

In Google Search AI Mode, enter your request with “Dynamic View” turned on:

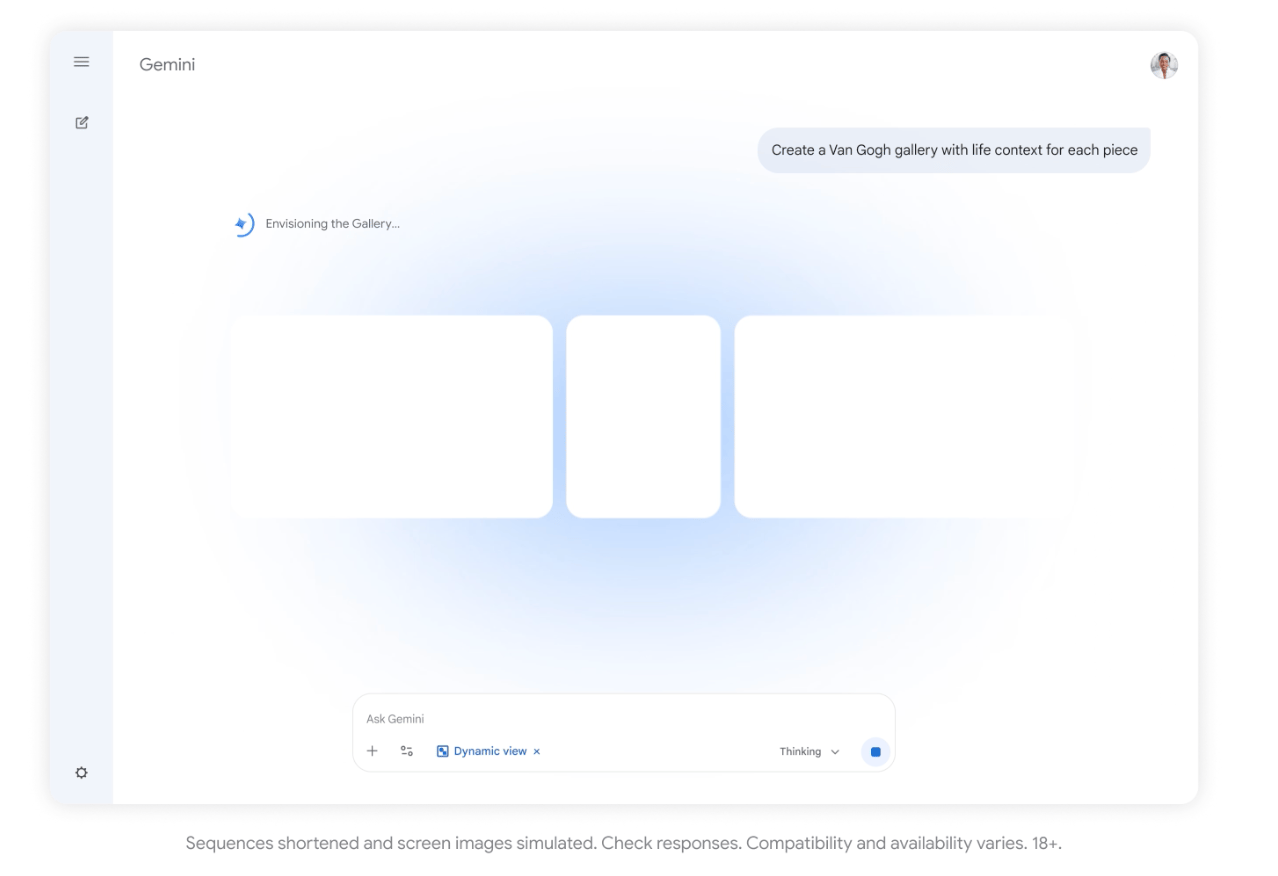

Google then starts generating the best interface for responding to your request:

The user experience for your request is then generated. In this case, it is a mini website.

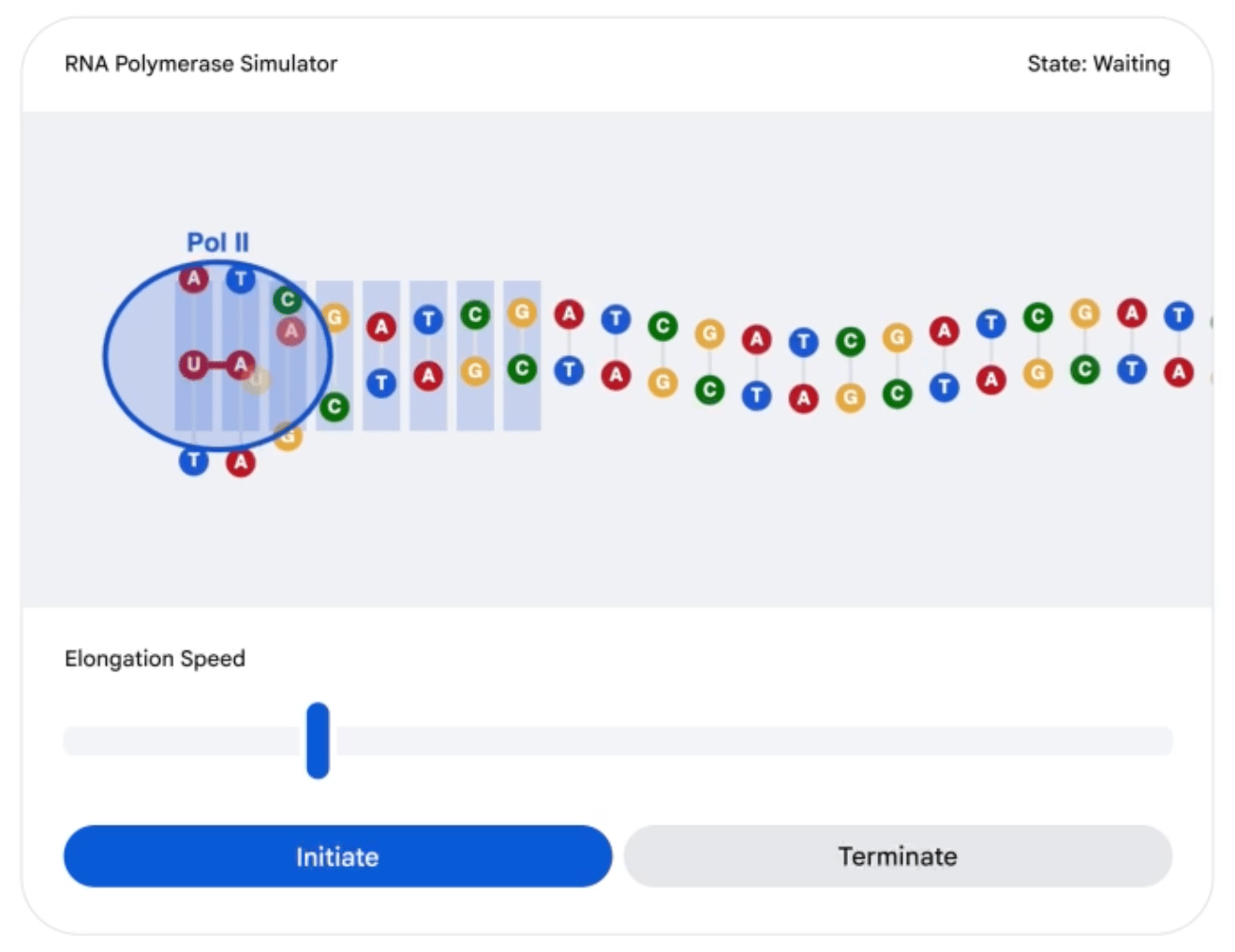

Here is the result for the request “show me how RNA Polymerase Simulator works”:

This advance opens the door to applications without fixed interfaces, changing how we interact with software.

3. Vibe coding is now easier than ever

Google AI Studio makes creating apps as easy as creating Google Docs, and it uses the same sharing model as Google Docs.

It has crystallized for me that end users will soon build the software they want, and that we’ll see the same range of sophistication in those apps as we see in spreadsheet models.

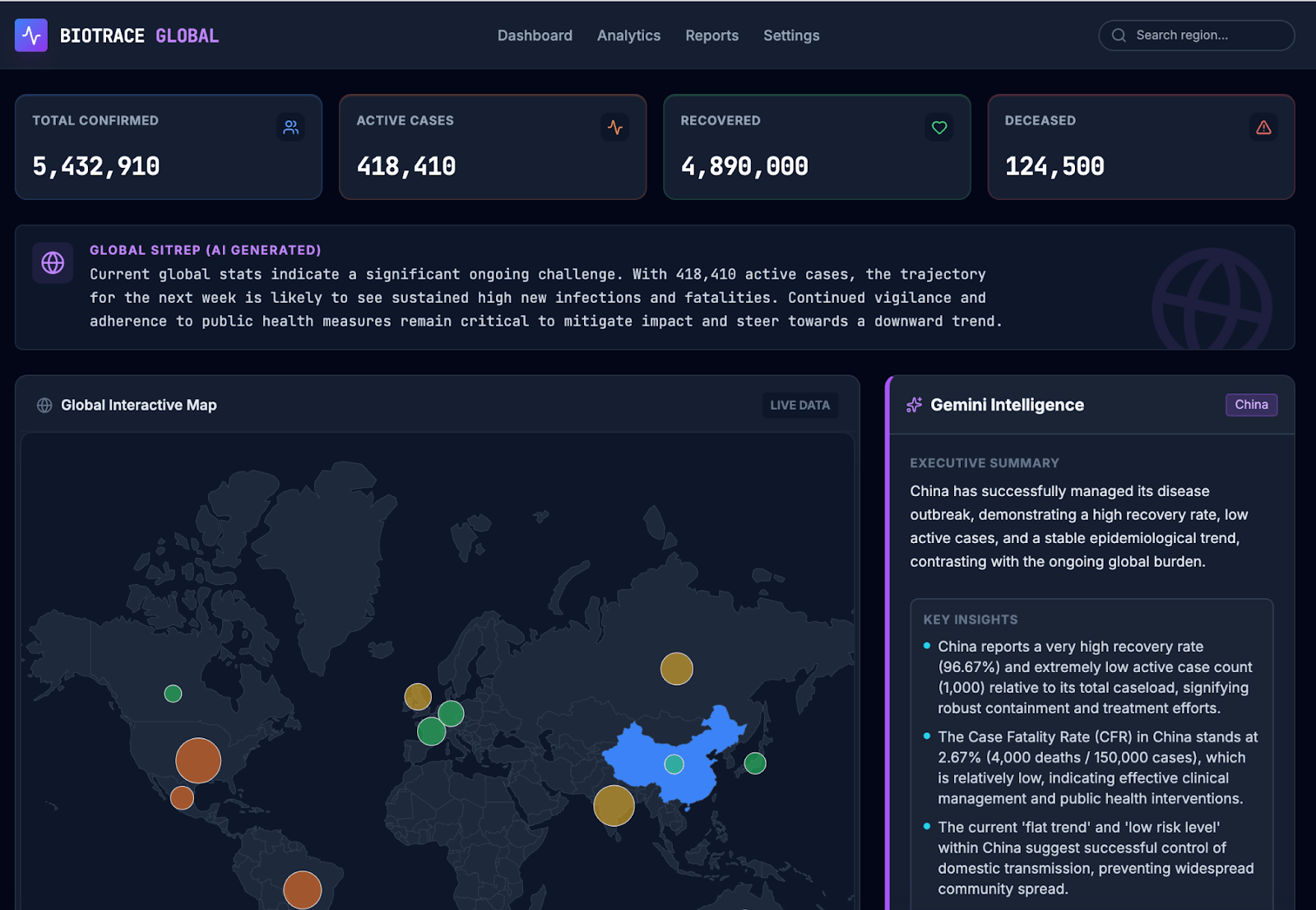

Without API connections provisioned by company IT, these applications won’t have a persistent back-end database. However, we’ve been experimenting with stateless dashboards built on static data and have been fairly impressed.

A mock dashboard we created in Google AI Studio

What this means for your organization

Organizations need a multi-model AI strategy. Those that want to stay ahead should give staff access not just to Copilot or ChatGPT, but also to Gemini and other models that are emerging as stronger in particular areas.

Training staff is the most valuable activity. As new capabilities come online, training staff on what is now possible may be the best way to help them reach higher productivity and produce higher-quality work.

Gemini: the new baseline expectation? Gemini, already available on Android phones, is expected to underpin Apple’s new Siri. Staff may soon expect the AI they use at work to match what they already have on their phones.

What’s next for OpenAI?

OpenAI recently announced it’s working on technology to replace AI researchers by 2028. Its timeline bears an eerie resemblance to that of a controversial paper, AI 2027.

AI 2027 predicts AI will run the US government, institute universal basic income for all and eliminate most white-collar jobs by 2030.

What should we make of it?

Check out my 5-minute video:

Until next week,

Dino