Dozens of AI rollouts. The same 5 problems show up.

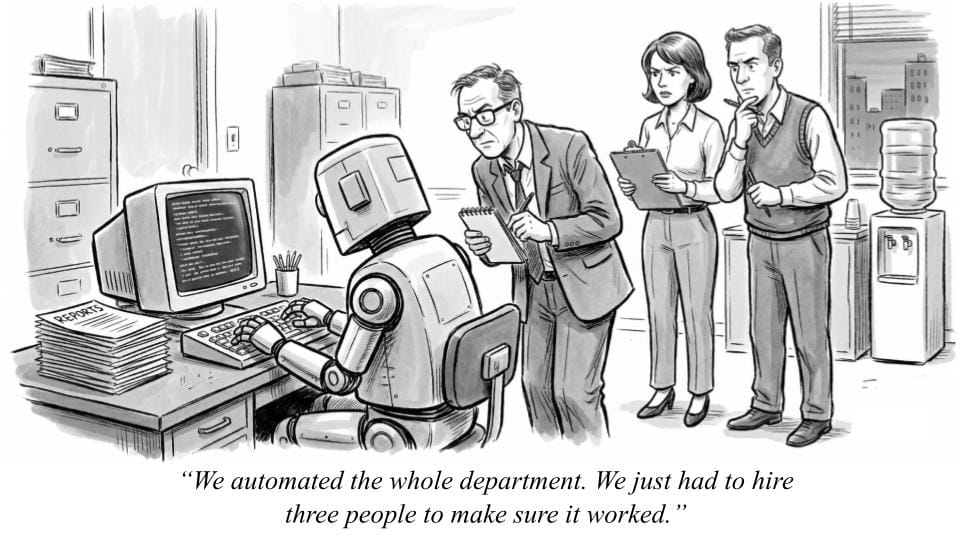

We automated the whole department...

Hi, and happy Tuesday.

At this point, most companies have done something with AI.

They’ve bought the tools.

They’ve run the pilots.

They’ve presented the “AI strategy” slides - usually with a confident arrow pointing up and to the right.

And yet, the actual impact tends to feel… modest.

It’s not quite the “transformation” that the C-suite mandated.

We’ve worked with dozens of enterprise teams on AI adoption - from running training programs to full implementations - across organizations ranging from global food manufacturers to regional dental practices.

Across all these industries and levels of maturity, we’re seeing the same quiet realization:

This is harder than it looks!

From our work, five patterns keep showing up.

----

1. The technology moves faster than any organization can absorb

Every few weeks, there’s a new “this changes everything” moment: OpenClaw, Claude Cowork, Perplexity Computer.

New tools. New features. New startups. New model versions.

Something that didn’t exist last quarter is now considered table stakes.

The result is a peculiar dynamic where:

- pilots are obsolete before they launch

- vendor evaluation criteria change mid-process

- “roadmaps” feel more like historical documents

Planning six months ahead starts to feel optimistic. Planning for twelve months borders on fiction.

What we’ve seen work is far less exciting: picking use cases that still create value with technology that is already slightly outdated - and accepting that you’ll revisit them later.

In other words, build things that survive contact with reality.

2. Many AI deployments need human babysitting

Most AI systems today live in a very specific zone: good enough to impress in a demo, but unreliable enough to require supervision. This is not quite the promise people had in mind.

A typical example is an AI system that finds 43 out of 48 relevant results.

While this is, objectively, remarkable - it is also, practically, unusable. Why? Because someone now needs to find the missing five.

So the “automated” workflow quietly becomes:

AI does the work → human checks everything → AI looks helpful

Now that the AI has at least one full-time supervisor, the business case for automation looks a lot weaker than it did when the project kicked off.
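
To put a number on the 43-out-of-48 example, here is a minimal sketch of the arithmetic. The function name `recall` is our own label for illustration, not something taken from a specific tool:

```python
def recall(found_relevant: int, total_relevant: int) -> float:
    """Fraction of the relevant items that the system actually returned."""
    if total_relevant <= 0:
        raise ValueError("total_relevant must be positive")
    return found_relevant / total_relevant


found, total = 43, 48          # figures from the example above
missed = total - found         # items a human still has to track down

print(f"recall: {recall(found, total):.1%}, missed: {missed}")
# prints "recall: 89.6%, missed: 5"
```

Roughly 90% sounds strong on a slide. The catch is that nobody knows which five items are missing, so the reviewer ends up searching the whole set again.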

3. People can’t describe what they actually want from the AI

Even if the technology were perfect, there is still a constraint: people struggle to explain their own work.

Enterprise workflows are full of unwritten logic - tiny decisions, exceptions, instincts built over years.

Ask someone to document what they do, and you’ll get a clean, confident explanation… that omits half of what actually matters.

In the training that we’ve done, one framing has proven surprisingly effective: treat the AI like a newly hired colleague. Not a genius or a mind reader.

Someone who will do exactly what you say - and also absolutely nothing you meant to say.

4. The capability surface is “jagged”

AI can produce a thoughtful market analysis, but then fail to correctly read a basic PDF.

Ethan Mollick describes this as the “jagged frontier”: strong performance in some areas, inexplicable failure in others.

The bigger problem is that just as teams begin to form a mental model of what the AI can and cannot do (that is, where the frontier lies), a new version of the model is released - and that mental model becomes incorrect.

So you get a cycle of:

confidence → surprise → adjustment → new release → repeat

While the technology “improves”, its predictability does not.

Our workaround is more careful prompting and process design - but even then, consistency remains elusive.

5. Everyone’s solving yesterday’s problems

The default instinct is to apply AI to existing tasks: summarizing reports, drafting emails, speeding up what already exists.

This delivers incremental gains, but is also roughly equivalent to putting your product catalog online as a PDF in 1996 and declaring victory.

It’s technically correct, but strategically underwhelming. And then we wonder why “transformation” hasn’t occurred yet.

The real opportunity lies in redesigning processes entirely. But this requires time, attention, and a willingness to question how things currently work - three resources that are usually already allocated elsewhere in organizations.

----

So what’s actually working?

It’s not the splashy pilots or the “AI transformation initiatives” - the teams seeing real progress are doing something far less impressive (at least on the surface):

They’re mapping workflows.

It looks like this:

Step 1: Identify challenges that are a good fit

Look for areas where humans are already imperfect. If an AI can match human-level accuracy (even if not perfect), that’s often enough to create value. Summarization, first drafts, internal research synthesis - these are areas where “mostly right” is surprisingly useful.

Avoid anything that demands near-perfect accuracy. AI is not yet a good fit for existential decisions.

Step 2: Document everything

Sit with the people doing the work, and watch where time disappears. In our experience, it’s typically:

- copying data between systems

- reformatting spreadsheets

- repeating processes no one has written down

These are not the tasks people put in presentations. They are, however, where most of the work actually happens.

Step 3: Redesign the process, not just the task

Instead of giving someone a chatbot and hoping for the best, build a tool for the workflow - one with guardrails, validation steps, and clear inputs.

This tool should be one component inside a newly designed business process.

Let the AI handle the heavy lifting. Let the process ensure the output is usable. Without that second part, you just get faster ways to produce questionable results.
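
For readers who think in code, here is a rough sketch of what "guardrails, validation steps, and clear inputs" can look like around a single AI step. Everything in it is an assumption made for illustration: `run_step`, `Result`, the 50-word limit, and `fake_model`, which merely stands in for whatever model call your tool would actually make.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Result:
    output: str
    needs_review: bool
    reason: str = ""


def run_step(text: str, model: Callable[[str], str], max_words: int = 50) -> Result:
    # Clear inputs: refuse to run on empty input rather than letting the model guess.
    if not text.strip():
        return Result("", True, "empty input")

    output = model(text)

    # Validation: cheap, deterministic checks before anyone relies on the output.
    if not output.strip():
        return Result(output, True, "empty output")
    if len(output.split()) > max_words:
        return Result(output, True, "output too long")

    return Result(output, False)


def fake_model(text: str) -> str:
    # Hypothetical stand-in for a real model call: returns the first sentence.
    return text.split(".")[0].strip() + "."


result = run_step("Invoices arrive by email. Staff retype them.", fake_model)
print(result.output, "| needs review:", result.needs_review)
```

The point of the design is that the process, not the person, decides when a human looks at the output. Only results that fail a check get routed to review, instead of someone re-checking everything.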

----

The uncomfortable conclusion

The gap between AI’s potential and its reality is not primarily a technology problem. It’s a design problem.

Yes - the tools are evolving quickly.

Yes - they require oversight.

Yes - their capabilities are inconsistent.

But you can design around these challenges.

From what we are seeing, the organizations making real progress are not necessarily using better models or the latest tools. They're the ones willing to do the boring, unglamorous work of understanding their own workflows at a shockingly minute level of detail, so they can train their AI tools and redesign their business processes.

Talk soon,

Dino

P.S. If you found this useful, consider forwarding it to a colleague who's asking, “what should we actually do with AI?” It might just save their pilot.